The Greatest Threat We Face From AI—and What We Can Do

Here’s a list of things that have really happened with artificial intelligence (AI), in order of increasing severity. When we get to the end of the list, we will see that the items are like beads connected by a string, and that the string reveals the most dangerous threat:

● When IBM Watson ultimately beat two human contestants on the quiz show Jeopardy!, a curious thing happened. A human buzzed in on a question first and gave a wrong response. After quizmaster Alex Trebek ruled the response incorrect, IBM Watson buzzed in and stupidly gave the exact same wrong answer.1

● In one experiment, deep learning AI was taught to detect wolves. Closer inspection showed that the AI was not focusing on the features of the wolves used to train the neural network but on the snow in the background of each picture. Similarly, military aerial reconnaissance AI was taught to detect the presence or absence of camouflaged tanks. The pictures of camouflaged tanks were taken on cloudy days and the pictures with no tanks were taken on sunny days. The AI learned “sunny” versus “cloudy” instead of “no tanks” versus “tanks.”2

● The “frozen robot syndrome” occurs when AI is confronted with circumstances not considered or encountered before. Uninformed self-driving cars can be confused by leaves, plastic bags or seagulls.

● Google wrote an image recognition algorithm that accidentally turned out to be racist. Google’s algorithm classified a picture of a black woman as a gorilla.

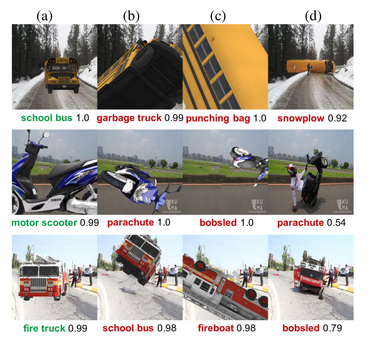

● An Uber self-driving car killed a pedestrian in 2018: “According to data obtained from the self-driving system, the system first registered radar and LIDAR observations of the pedestrian about six seconds before impact, when the vehicle was traveling at 43 mph… As the vehicle and pedestrian paths converged, the self-driving system software classified the pedestrian as an unknown object, as a vehicle and then as a bicycle with varying expectations of future travel path. At 1.3 seconds before impact, the self-driving system determined that an emergency braking maneuver was needed to mitigate a collision.” By then, it was too late. (See below for machine learning guesses in one study.)

● During the Cold War, the Soviet Union constructed Oko, a system tasked with early detection of a missile attack from the United States.3 Oko detected such an attack on September 26, 1983. Sirens blared and the system declared that an immediate Soviet retaliatory strike was mandatory. The Soviet officer in charge felt that something was not right and did not launch the retaliatory strike. His decision was the right one. Oko had mistakenly interpreted sunlight reflecting off clouds as inbound American missiles. By making this decision, the Soviet officer, Lt. Col. Stanislav Petrov, saved the world from thermonuclear war.

The common theme in each of these stories is the unintended contingency of the AI system. Fiction makes the same point: the HAL computer in 2001: A Space Odyssey did not go rogue due to AI becoming self-aware or conscious. Rather, HAL was programmed to prioritize the goal of the mission over the lives of the resident astronauts. This unintended consequence of HAL’s programming fueled Arthur C. Clarke’s story of the conflict between the computer and the astronauts.

In the real world, a system can be fixed to avoid a repeat occurrence of an unintended contingency, but these fixes are not always optimal. Google solved its accidentally racist algorithm with a patch that prevented any image from being classified as a gorilla, chimpanzee, or monkey. The ban included pictures of the primates themselves.

The cost of discovering an unexpected contingency can, however, be devastating. A human life or a thermonuclear war is too high a price to pay for such information. And even after a specific problem is fixed, additional unintended contingencies can continue to occur.

There are three ways to minimize unintended consequences: (1) use systems with low complexity, (2) employ programmers with elevated domain expertise, and (3) test thoroughly. Real-world testing can expose many unintended consequences, ideally without harming anyone.

For AI systems, low complexity means narrow AI. AI thus far, when reduced to commercial practice, has been relatively narrow. As the conjunctive complexity of a system grows linearly, the number of contingencies grows exponentially. Domain expertise can anticipate many of these contingencies and minimize the unintended ones. But, as the above list illustrates, even the best programmers sometimes miss one.
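To make the linear-versus-exponential point concrete, here is a minimal sketch. It assumes, purely for illustration, that a system's complexity can be modeled as a set of independent yes/no conditions; the condition names and helper functions below are hypothetical and do not come from any real system. Under that assumption, each added condition doubles the number of joint scenarios a programmer would have to anticipate.

```python
from itertools import product

# Hypothetical yes/no conditions a self-driving system might face (illustrative only).
CONDITIONS = ["snow", "night", "pedestrian", "plastic_bag", "glare"]


def enumerate_scenarios(conditions):
    """Return every combination of the given yes/no conditions as a list of dicts."""
    return [
        dict(zip(conditions, values))
        for values in product((False, True), repeat=len(conditions))
    ]


def scenario_count(num_conditions):
    """Number of joint scenarios for num_conditions independent yes/no conditions."""
    return 2 ** num_conditions


def untested_fraction(num_conditions, num_tested):
    """Fraction of scenarios left unexamined after testing num_tested distinct ones."""
    total = scenario_count(num_conditions)
    return max(total - num_tested, 0) / total


if __name__ == "__main__":
    # Five conditions already yield 32 distinct scenarios.
    print(len(enumerate_scenarios(CONDITIONS)))
    # Conditions are added linearly; the scenario count doubles each time.
    for n in (5, 10, 20, 40):
        print(n, scenario_count(n), untested_fraction(n, 1_000_000))
```

In this toy model, a million distinct test cases cover every scenario at 10 conditions but only a vanishing fraction at 40, which is one way to read the argument for keeping AI narrow. Real systems do not decompose so neatly, so the numbers are only suggestive.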

Thus, the greatest threat is the unintended contingency, the thing that never occurred to the programmer.

Notes:

1 Smith, Gary. The AI Delusion. Oxford University Press, 2018.

2 Note: This story has been disputed.

3 Scharre, Paul. Army of None: Autonomous Weapons and the Future of War. W. W. Norton & Company, 2018.

Further reading:

Why did Watson think Toronto was in the USA? How that happened tells us a lot about what AI can and can’t do, to this day.

What you see that the machine doesn’t. You see the “skeleton” of an idea.

How algorithms can seem racist. Machines don’t think. They work with piles of “data” from many sources. What could go wrong? Good thing someone asked…

and

Are self-driving cars really safer…? A former Uber executive says no. Before we throw away the Driver’s Handbook… (Brendan Dixon)